Core banking high availability and fault tolerance are two related but distinct properties of a core banking system. High availability refers to the system’s ability to remain operational and accessible to users for a defined proportion of time, typically expressed as a percentage uptime target such as 99.9 percent or 99.99 percent. Fault tolerance refers to the system’s ability to continue functioning correctly when one or more of its components experience a failure, without that failure causing the entire system to become unavailable or producing incorrect results.
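To make the uptime percentages concrete, the short sketch below converts a target into an annual downtime budget. The function name and the use of a 365-day year are assumptions made for illustration, not part of any standard.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours; leap years are ignored in this sketch


def annual_downtime_budget_hours(uptime_percent: float) -> float:
    """Return the maximum downtime per year that an uptime target permits."""
    return HOURS_PER_YEAR * (1 - uptime_percent / 100)


for target in (99.9, 99.99):
    hours = annual_downtime_budget_hours(target)
    print(f"{target}% uptime allows about {hours:.2f} hours of downtime per year")
```

Under these assumptions, a 99.9 percent target leaves roughly 8.76 hours of downtime per year, while 99.99 percent leaves a little under one hour.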

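A highly available system is not necessarily fault tolerant, and a fault tolerant system is not necessarily highly available. A system can, for example, recover quickly from every failure while still losing in-flight transactions each time, and a system that masks component failures can still be taken offline for planned maintenance. In practice, modern core banking systems must achieve both properties simultaneously to meet the operational and regulatory expectations placed on them.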

For payment institutions and e-money institutions operating on real-time payment rails such as SEPA Instant Credit Transfer and Faster Payments, these properties are particularly critical. Payment schemes impose strict processing time requirements, and a core banking system that becomes unavailable or produces incorrect results during payment processing can cause scheme rule violations, financial losses, and regulatory incidents. Under the EU’s Digital Operational Resilience Act (DORA), financial institutions are additionally required to demonstrate that their ICT systems are designed and operated with digital operational resilience as a core design requirement rather than an afterthought.

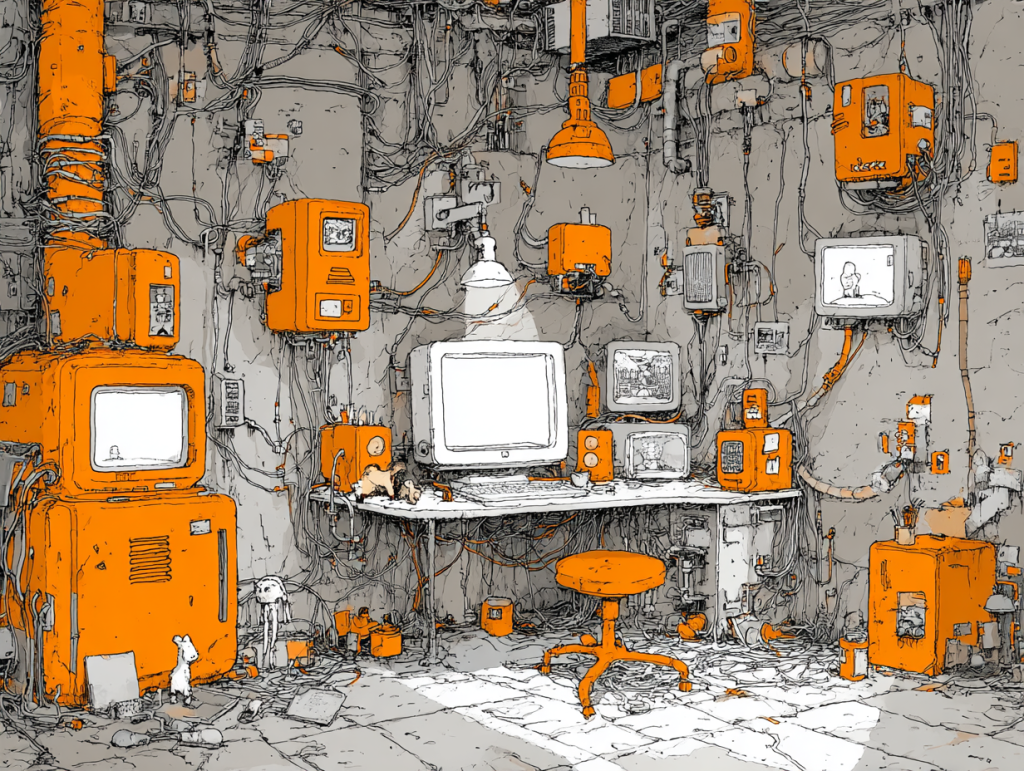

In a typical high availability core banking architecture, as illustrated above, the system is deployed across multiple availability zones within a cloud region, with each zone operating independent power, networking, and physical infrastructure. Each service runs as multiple instances distributed across these zones, with a load balancer distributing incoming requests across healthy instances.

A health monitoring system continuously checks the status of each instance, and if an instance fails its health check, the load balancer automatically removes it from the pool and stops routing traffic to it. The remaining instances absorb the traffic without service interruption. If an entire availability zone becomes unavailable, the instances in the other zones continue to serve requests, and the failed zone’s instances are automatically replaced in the remaining zones.

Key Takeaways:

- High availability in core banking systems is achieved through a combination of redundant infrastructure, automated failover, load balancing, and health monitoring, designed to ensure that the system remains operational even when individual components fail

- Fault tolerance is the system’s ability to continue functioning correctly in the presence of component failures, achieved through techniques including replication, circuit breakers, graceful degradation, and stateless service design that prevent individual failures from cascading across the platform

- Under DORA, payment institutions and e-money institutions must define recovery time objectives and recovery point objectives for their critical ICT systems, conduct regular resilience testing, and demonstrate to their national competent authority that their core banking infrastructure can withstand and recover from ICT incidents including infrastructure failures, cyber incidents, and third-party service disruptions

How Core Banking High Availability Is Achieved

Redundant infrastructure and multi-zone deployment: The foundational requirement for high availability is the elimination of single points of failure across all layers of the core banking infrastructure. A single point of failure is any component whose failure would render the entire system unavailable. In a cloud-based core banking deployment, single points of failure are eliminated by deploying each infrastructure component, including application servers, databases, message brokers, load balancers, and network gateways, with redundant instances across multiple availability zones. The failure of any single instance or any single availability zone does not cause system unavailability, as redundant instances in other zones continue to operate.
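The effect of redundancy on availability can be estimated with a simple calculation. The sketch below assumes that instances fail independently of one another, which is an optimistic simplification (shared software defects or regional outages break that assumption), and the function name is hypothetical.

```python
def combined_availability(instance_availability: float, instances: int) -> float:
    """Availability of a component that stays up if at least one of
    `instances` redundant copies is up, assuming independent failures."""
    if instances < 1:
        raise ValueError("at least one instance is required")
    probability_all_down = (1 - instance_availability) ** instances
    return 1 - probability_all_down


for zones in (1, 2, 3):
    print(zones, "zone(s):", f"{combined_availability(0.99, zones):.6f}")
```

With a single instance at 99 percent availability, adding a second independent instance raises the estimate to 99.99 percent, which is why removing single points of failure has such a large effect.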

Load balancing and traffic distribution: Load balancers distribute incoming requests across multiple instances of each service, ensuring that no single instance is overwhelmed by traffic and that requests are automatically redirected to healthy instances when a failure occurs. In core banking systems, load balancing operates at multiple layers, including the network layer for routing incoming API and user traffic, the application layer for distributing processing requests across service instances, and the database layer for distributing read queries across database replicas. Cloud-native load balancers provide health check integration, automatically removing unhealthy instances from the traffic pool and adding them back when they recover.
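The interaction between load balancing and health checks can be sketched as a round-robin balancer that only routes to instances currently marked healthy. The class and field names are illustrative assumptions and do not correspond to any particular cloud provider’s API.

```python
from dataclasses import dataclass
from itertools import count


@dataclass
class Instance:
    name: str
    zone: str
    healthy: bool = True  # updated by the health monitoring system


class RoundRobinBalancer:
    """Routes each request to the next healthy instance in turn."""

    def __init__(self, instances):
        self._instances = list(instances)
        self._counter = count()

    def healthy_pool(self):
        return [i for i in self._instances if i.healthy]

    def route(self) -> Instance:
        pool = self.healthy_pool()
        if not pool:
            raise RuntimeError("no healthy instances available")
        return pool[next(self._counter) % len(pool)]


instances = [Instance("api-1", "zone-a"), Instance("api-2", "zone-b"), Instance("api-3", "zone-c")]
balancer = RoundRobinBalancer(instances)
instances[1].healthy = False  # simulate a failed health check on api-2
print([balancer.route().name for _ in range(4)])
```

Because the pool is recomputed on every request, an instance that recovers and is marked healthy again rejoins the rotation without any further action.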

Automated failover: Automated failover is the mechanism by which a core banking system automatically switches from a failed primary component to a standby component without requiring manual intervention. In database infrastructure, automated failover is achieved through primary-replica replication, in which a replica database maintains a continuously updated copy of the primary database’s data and is automatically promoted to primary if the primary becomes unavailable. The failover process, including the detection of the primary failure, the promotion of the replica, and the redirection of database connections, must complete within the institution’s defined recovery time objective for the database layer. For core banking systems where account balance accuracy is critical, the replication must be synchronous, meaning that each write to the primary is confirmed only after it has been replicated to the standby, ensuring zero data loss in a failover scenario.
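The following in-memory sketch illustrates the failover sequence described above: a write is acknowledged only after the standby has it, and the standby is promoted when the primary is detected as down. It is a deliberately simplified model with hypothetical class names; it omits networking, failure detection timing, and the case where the standby itself is unavailable.

```python
class Node:
    def __init__(self, name: str):
        self.name = name
        self.up = True
        self.data = {}


class DatabaseCluster:
    def __init__(self):
        self.primary = Node("db-primary")
        self.standby = Node("db-standby")

    def write(self, key, value) -> str:
        if not self.primary.up:
            raise ConnectionError("primary unavailable")
        self.primary.data[key] = value
        # Synchronous replication: the standby applies the write
        # before the client receives an acknowledgement.
        self.standby.data[key] = value
        return "ack"

    def failover_if_needed(self) -> bool:
        """Promote the standby if the primary is down. Returns True on promotion."""
        if not self.primary.up and self.standby.up:
            self.primary, self.standby = self.standby, self.primary
            return True
        return False

    def read(self, key):
        return self.primary.data[key]


cluster = DatabaseCluster()
cluster.write("account:1001", 250_00)
cluster.primary.up = False          # simulate primary failure
cluster.failover_if_needed()        # standby becomes the new primary
print(cluster.primary.name, cluster.read("account:1001"))
```

Since every acknowledged write already exists on the standby, the promoted node serves the same balance that the failed primary held.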

Health monitoring and alerting: Continuous health monitoring is the mechanism by which the core banking system detects component failures and triggers automated remediation. Each service instance exposes health check endpoints that the monitoring infrastructure queries at defined intervals, assessing whether the instance is running, whether it can connect to its dependencies, and whether it is performing within acceptable response time thresholds. Instances that fail health checks are automatically removed from the load balancer pool and replaced. Monitoring data is aggregated into a centralised observability platform that provides real-time visibility into the health, performance, and resource utilisation of all system components, enabling operations teams to identify and respond to emerging issues before they affect system availability.
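A health check endpoint typically returns a small structured report. The function below is a minimal sketch of how such a report might be assembled from the three questions listed above; the field names and the 200 ms default threshold are assumptions chosen for illustration.

```python
from typing import Callable, Dict


def health_report(
    dependency_checks: Dict[str, Callable[[], bool]],
    latency_ms: float,
    max_latency_ms: float = 200.0,
) -> dict:
    """Build a health report for one service instance.

    The instance is healthy only if every dependency check passes
    and the measured latency is within the threshold.
    """
    dependencies = {}
    for name, check in dependency_checks.items():
        try:
            dependencies[name] = bool(check())
        except Exception:
            # A check that raises is treated as a failed dependency.
            dependencies[name] = False
    latency_ok = latency_ms <= max_latency_ms
    healthy = all(dependencies.values()) and latency_ok
    return {
        "status": "healthy" if healthy else "unhealthy",
        "dependencies": dependencies,
        "latency_ok": latency_ok,
    }


print(health_report({"database": lambda: True, "message_broker": lambda: True}, latency_ms=35.0))
```

A monitoring system polling this report could remove the instance from the load balancer pool whenever the status is not healthy.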

How Fault Tolerance Is Achieved

Service replication and stateless design: Fault tolerance at the service level is achieved by running multiple instances of each service simultaneously and designing each service to be stateless, meaning that it does not store session data or processing state locally. Because each request contains all the information required to process it, any instance of the service can handle any request, and the failure of one instance does not affect the ability of other instances to continue processing. Stateless service design is a prerequisite for effective horizontal scaling and automated failover, as it ensures that traffic can be redistributed across instances without loss of processing context.
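The sketch below illustrates stateless design under simplifying assumptions: each request carries everything needed to process it, all durable state lives in a shared store (standing in for the database), and the service instances themselves keep nothing between requests. All names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TransferRequest:
    request_id: str
    debit_account: str
    credit_account: str
    amount_minor_units: int  # e.g. cents, to avoid floating point money


class LedgerStore:
    """Shared external state, standing in for the core banking database."""

    def __init__(self, balances):
        self.balances = dict(balances)


class TransferService:
    """Keeps no session or processing state between requests."""

    def __init__(self, instance_name: str, store: LedgerStore):
        self.instance_name = instance_name
        self.store = store

    def handle(self, request: TransferRequest) -> dict:
        self.store.balances[request.debit_account] -= request.amount_minor_units
        self.store.balances[request.credit_account] += request.amount_minor_units
        return {"request_id": request.request_id, "handled_by": self.instance_name}


store = LedgerStore({"A": 1000, "B": 0})
instance_1 = TransferService("svc-1", store)
instance_2 = TransferService("svc-2", store)

# Consecutive requests can be served by different instances with the same outcome.
instance_1.handle(TransferRequest("r1", "A", "B", 300))
instance_2.handle(TransferRequest("r2", "A", "B", 200))
print(store.balances)
```

If `svc-1` fails after the first request, `svc-2` can process the second without needing any context from the failed instance.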

Circuit breakers: A circuit breaker is a fault tolerance pattern that prevents a failing dependency from causing a cascade of failures across the payment platform. When a service makes repeated requests to a dependency, such as an external payment network or a downstream microservice, and those requests consistently fail or time out, the circuit breaker opens, stopping further requests to the failing dependency for a defined period. During this open period, the service applies fallback logic, such as queuing the request for later processing or returning a defined error response, rather than continuing to make failing requests that consume resources and increase latency. Once the timeout period has elapsed, the circuit breaker enters a half-open state, allowing a small number of test requests through to determine whether the dependency has recovered. It closes fully if those requests succeed and reopens if they fail.
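The three states can be sketched in a few dozen lines. This is a minimal single-threaded illustration, not a production implementation: the thresholds are arbitrary, the half-open state admits a single trial request, and the clock is injectable so that the behaviour can be exercised without waiting.

```python
import time


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout  # seconds to stay open
        self._clock = clock
        self._failures = 0
        self._opened_at = None
        self.state = "closed"

    def call(self, func, *args, **kwargs):
        if self.state == "open":
            if self._clock() - self._opened_at < self.reset_timeout:
                raise CircuitOpenError("calls to dependency are suspended")
            self.state = "half_open"  # timeout elapsed: allow a trial request
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._record_failure()
            raise
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self.state = "closed"
        return result

    def _record_failure(self):
        self._failures += 1
        if self.state == "half_open" or self._failures >= self.failure_threshold:
            self.state = "open"
            self._opened_at = self._clock()
```

A caller would wrap each outbound request, for example `breaker.call(send_to_payment_network, message)` where `send_to_payment_network` is a hypothetical client function, and catch `CircuitOpenError` to apply its fallback logic such as queuing the request.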

Graceful degradation: Graceful degradation is the ability of a core banking system to continue providing a reduced but functional service when some components are unavailable, rather than failing completely. In a payment platform, graceful degradation might mean continuing to process payment instructions and update account balances when the notification service is unavailable, even though customers do not receive real-time payment confirmations until the notification service recovers. The system prioritises the critical payment processing and ledger posting functions, while non-critical functions such as notification delivery, analytics processing, and report generation are queued for processing when the affected services recover.
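The notification example can be sketched as follows. The critical ledger posting always runs, while a failure in the non-critical notification step is caught and the notification is queued for later delivery. The function names, the exception type, and the dictionary-based ledger are all assumptions made for illustration.

```python
from collections import deque


class NotificationUnavailable(Exception):
    """Raised by the notification client when the service is down."""


def process_payment(ledger: dict, payment: dict, notify, pending: deque) -> dict:
    # Critical path: post the payment to the ledger.
    ledger[payment["debtor"]] -= payment["amount"]
    ledger[payment["creditor"]] += payment["amount"]

    # Non-critical path: a notification failure must not fail the payment.
    try:
        notify(payment)
        notified = True
    except NotificationUnavailable:
        pending.append(payment)  # deliver once the service recovers
        notified = False
    return {"posted": True, "notified": notified}


def retry_pending(notify, pending: deque) -> int:
    """Deliver queued notifications in order; stops at the first failure."""
    sent = 0
    while pending:
        notify(pending[0])  # raises if still unavailable; item stays queued
        pending.popleft()
        sent += 1
    return sent
```

The payment result reports `posted` and `notified` separately, so callers and monitoring can see that the service is running in a degraded mode rather than treating the payment as failed.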

Data replication and consistency: Fault tolerance at the data layer requires that data is replicated across multiple storage nodes so that the failure of any individual node does not result in data loss. For core banking systems, where the integrity and completeness of financial records are a regulatory requirement, data replication must be configured with appropriate consistency guarantees. Synchronous replication, in which a write is confirmed only after it has been committed to all replicas, provides the strongest consistency guarantee but introduces latency on write operations. Asynchronous replication provides lower write latency but introduces the risk of a small amount of data loss if the primary fails before the replica has received the latest writes. The appropriate replication model for each data store depends on the criticality of the data and the institution’s recovery point objective for that data layer.
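The trade-off between the two replication models can be illustrated with a toy write log. In this sketch, which uses hypothetical names and ignores network latency entirely, asynchronous writes are acknowledged immediately and shipped to the replica later, so anything not yet shipped is lost if the primary fails.

```python
class ReplicatedStore:
    def __init__(self, synchronous: bool):
        self.synchronous = synchronous
        self.primary_log = []
        self.replica_log = []
        self._unshipped = []  # acknowledged but not yet on the replica (async only)

    def write(self, entry) -> str:
        self.primary_log.append(entry)
        if self.synchronous:
            self.replica_log.append(entry)  # replica commits before the ack
        else:
            self._unshipped.append(entry)   # replica catches up later
        return "ack"

    def ship_pending(self) -> None:
        """Simulate the asynchronous replication stream catching up."""
        self.replica_log.extend(self._unshipped)
        self._unshipped.clear()

    def lost_if_primary_fails_now(self) -> list:
        # The replica log is always a prefix of the primary log.
        return self.primary_log[len(self.replica_log):]


for synchronous in (True, False):
    store = ReplicatedStore(synchronous)
    for entry in ("credit 100", "debit 30", "debit 20"):
        store.write(entry)
    print("synchronous" if synchronous else "asynchronous", store.lost_if_primary_fails_now())
```

In the synchronous case nothing is at risk at any point, whereas in the asynchronous case every write since the last shipment would be missing after a failover.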

High Availability, Fault Tolerance, and DORA

DORA requires financial institutions to implement ICT systems that meet defined standards of digital operational resilience, and the high availability and fault tolerance architecture of the core banking system is central to meeting these requirements. Institutions must define recovery time objectives and recovery point objectives for each critical ICT system, implement and test the technical controls required to meet those objectives, and conduct regular resilience testing to verify that the controls function as designed under realistic failure scenarios.

DORA’s resilience testing requirements include basic vulnerability assessments and scenario-based testing for all financial entities, and Threat-Led Penetration Testing for significant entities. Scenario-based resilience testing for core banking systems should include infrastructure failure scenarios such as availability zone outages, database primary failures, and message broker unavailability, as well as application-level failure scenarios such as service instance failures and circuit breaker activation. The results of resilience testing must be documented and used to drive improvements to the system’s high availability and fault tolerance architecture on an ongoing basis.

FAQ:

What is the difference between recovery time objective (RTO) and recovery point objective (RPO)?

- Recovery time objective (RTO) is the maximum acceptable duration of downtime for a system or service following a failure or incident, measured from the point at which the failure occurs to the point at which the system is restored to operational status. A core banking system with an RTO of one hour must be restorable to operational status within one hour of a failure.

- Recovery point objective (RPO) is the maximum acceptable amount of data loss following a failure, measured as the time elapsed between the last successful data backup or replication and the point of failure. A system with an RPO of zero requires synchronous replication so that no data is lost in a failover scenario.

- Both metrics must be defined for each critical ICT system under DORA, and the technical controls implemented must be demonstrably capable of meeting the defined objectives under realistic failure conditions.
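Both objectives can be checked against the timestamps recorded during a failover drill. The sketch below shows one way to do this; the function name, the result fields, and the sample timestamps are invented for illustration.

```python
from datetime import datetime, timedelta


def evaluate_drill(
    failure_at: datetime,
    restored_at: datetime,
    last_replicated_at: datetime,
    rto: timedelta,
    rpo: timedelta,
) -> dict:
    """Compare measured downtime and data loss window with the objectives."""
    downtime = restored_at - failure_at
    data_loss_window = failure_at - last_replicated_at
    return {
        "downtime": downtime,
        "data_loss_window": data_loss_window,
        "rto_met": downtime <= rto,
        "rpo_met": data_loss_window <= rpo,
    }


result = evaluate_drill(
    failure_at=datetime(2025, 1, 15, 10, 0, 0),
    restored_at=datetime(2025, 1, 15, 10, 42, 0),
    last_replicated_at=datetime(2025, 1, 15, 10, 0, 0),
    rto=timedelta(hours=1),
    rpo=timedelta(0),
)
print(result["rto_met"], result["rpo_met"])
```

In this hypothetical drill the system was restored in 42 minutes against a one-hour RTO, and the replica was fully current at the moment of failure, so an RPO of zero was also met.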

How does high availability differ between on-premises and cloud-based core banking deployments?

- In an on-premises deployment, high availability requires the institution to procure, install, and maintain redundant physical hardware across multiple data centre locations, manage network connectivity between those locations, and operate the monitoring and failover infrastructure independently. The capital cost of on-premises high availability infrastructure is substantial, and the time required to provision additional capacity in response to failures or demand spikes is measured in days or weeks rather than seconds.

- In a cloud-based deployment, high availability is achieved through the cloud provider’s multi-availability-zone infrastructure, with redundant instances provisioned and managed through software configuration rather than physical hardware procurement. Failover, scaling, and health monitoring are automated through cloud-native services, and additional capacity can typically be provisioned within seconds to minutes. For payment institutions and e-money institutions subject to DORA’s operational resilience requirements, cloud-based high availability architecture is generally more cost-effective and more reliably testable than on-premises equivalents.